How to confidently compare the integrity of files in two large folders

October 24, 2018

How do you confidently compare two folders in the year 2018? I expected there to be an easier answer to this question.

My team sometimes needs to move a large number of files between storage systems, like different cloud providers. Since the files are crucial to the business, it’s really important to make sure that the move completes correctly: that all the files really got copied and their contents haven’t changed. The “cloud providers” make this part fun, as many of them play tricks with the file system so that a file’s contents aren’t actually downloaded locally until they’re really needed, and some of them don’t accurately preserve metadata such as dates.

I was in a situation where I had the same folder supposedly synced in two cloud providers, and wanted to make sure that it really checks out, i.e. that the folders contain the same files and their contents are intact. Since there were tens of thousands of files in many folders, manual comparison was out of the question.

What I expected to do was to just fire up a tool like Kaleidoscope, drag the two folders onto it, and be done with it. This didn’t work for the reason I mentioned before: Kaleidoscope compares all of the attributes of the files, including dates. If the dates are different, Kaleidoscope shows that the files are different, even if their contents are otherwise the same.

What I ended up doing was cooking up my own method, which is slightly overkill, but it does give me full confidence that the files really are intact. So here’s how I did it. Maybe it’s useful to you too.

Step 1: sync both folders to be “available offline”

Obvious step, but I mention it just in case. You need to have the full contents of all files available locally, which means you need enough local storage to hold two full copies of the files for as long as you are doing this comparison. (Well, as I discuss below, you could do it piecemeal too, but for simplicity, let’s stick with this.)

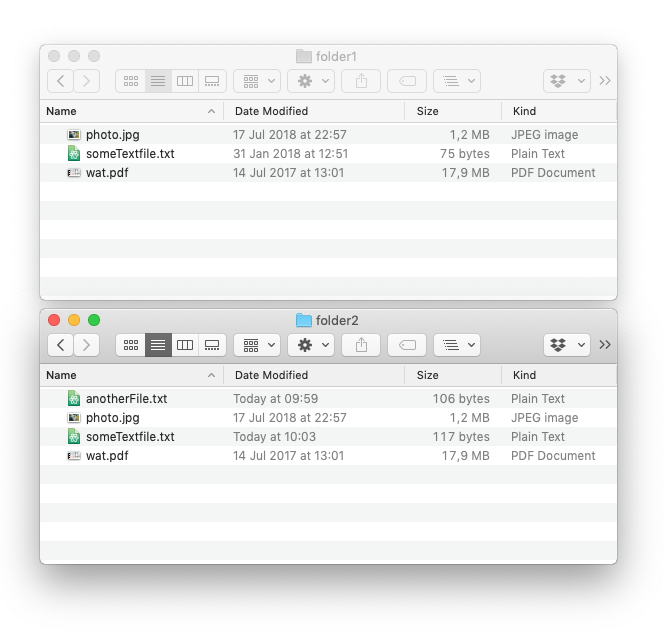

For the following demo, here are two folders I’ll be comparing. This is obviously a simple example, but the method scales well to large folders with thousands of files and many gigabytes of data.

Step 2: generate a listing of all the files

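This is easy. In Terminal, change into each of the two folders in turn and run the following command. For the second folder, change the output name (for example, to folder2.tsv) so that it doesn’t overwrite the first listing.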

find . -type f > ../folder1.tsv

This just generates a listing of files, which looks like this.

./someTextfile.txt

./.DS_Store

./wat.pdf

./photo.jpg

This command runs very fast even on large folders. If your folders contain subfolders, these will all be reflected in the file paths.
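If you would rather stay in Python for every step, here is a rough equivalent of `find . -type f`. It is a sketch, not part of the original method; the script name `listfiles.py` in the usage comment is hypothetical. Like `find`, it includes hidden files such as `.DS_Store` and makes no promise about the order of the output.

```python
import os
import sys


def list_files(root="."):
    """Relative paths of all regular files under root, similar to `find . -type f`."""
    paths = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            # find -type f skips symlinks; os.path.isfile follows them, so exclude links explicitly
            if os.path.isfile(full) and not os.path.islink(full):
                paths.append("./" + os.path.relpath(full, root))
    return paths


if __name__ == "__main__":
    # Hypothetical usage, run inside the folder: python3 listfiles.py > ../folder1.tsv
    for path in list_files(sys.argv[1] if len(sys.argv) > 1 else "."):
        print(path)
```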

Step 3: checksum the files

Now take the list of files and checksum their contents. For Unix gurus, this is a one-line command, but I ended up writing a Python script for it, which is here.

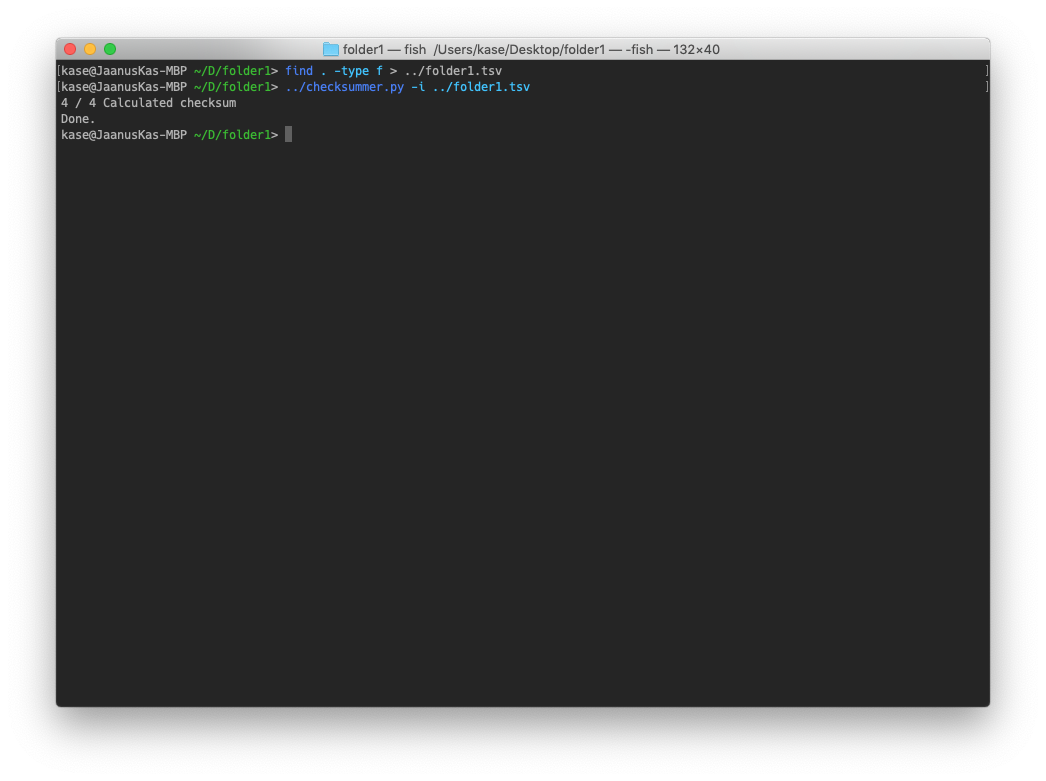

You run the script, giving it the output file from the previous step. You should be in the same folder where you ran the previous command, so the whole session might look something like this.

When you open the output file (which has the same name and location as the input file, but with “-out” appended to its name), you’ll see this.
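For readers who want something to start from, here is a minimal sketch of such a checksum script. It is not the script from the screenshot. The choice of SHA-256, the tab-separated `checksum<TAB>path` line format, inserting “-out” before the file extension, and the script name `checksum.py` are all assumptions. Files are read in chunks so that large files don’t have to fit in memory, and the output is sorted by checksum, as in the results discussed in step 4.

```python
import hashlib
import os
import sys


def file_checksum(path, chunk_size=1 << 20):
    """SHA-256 of a file's contents, read in 1 MiB chunks to keep memory use flat."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def output_path(listing_path):
    """Assumed naming scheme: ../folder1.tsv -> ../folder1-out.tsv."""
    base, ext = os.path.splitext(listing_path)
    return base + "-out" + ext


def checksum_listing(listing_path):
    # Paths in the listing are relative, so run this from the folder where find ran.
    with open(listing_path, encoding="utf-8") as f:
        paths = [line.rstrip("\n") for line in f if line.strip()]
    rows = sorted((file_checksum(p), p) for p in paths)  # sort by checksum, then path
    out = output_path(listing_path)
    with open(out, "w", encoding="utf-8") as f:
        for checksum, path in rows:
            f.write(f"{checksum}\t{path}\n")
    return out


if __name__ == "__main__":
    # Hypothetical usage: python3 checksum.py ../folder1.tsv
    print(checksum_listing(sys.argv[1]))
```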

You repeat steps 2 and 3 for each folder that you would like to compare with one another. Note that you don’t have to run them at the same time: if you’re short of storage and can only afford to keep one full copy of the files locally, you first sync the folder from one provider and run the above steps, then sync it from another, and so on. Just keep in mind that the comparison results reflect the folder contents at the exact moment when you ran the steps.

Step 4: compare the outputs

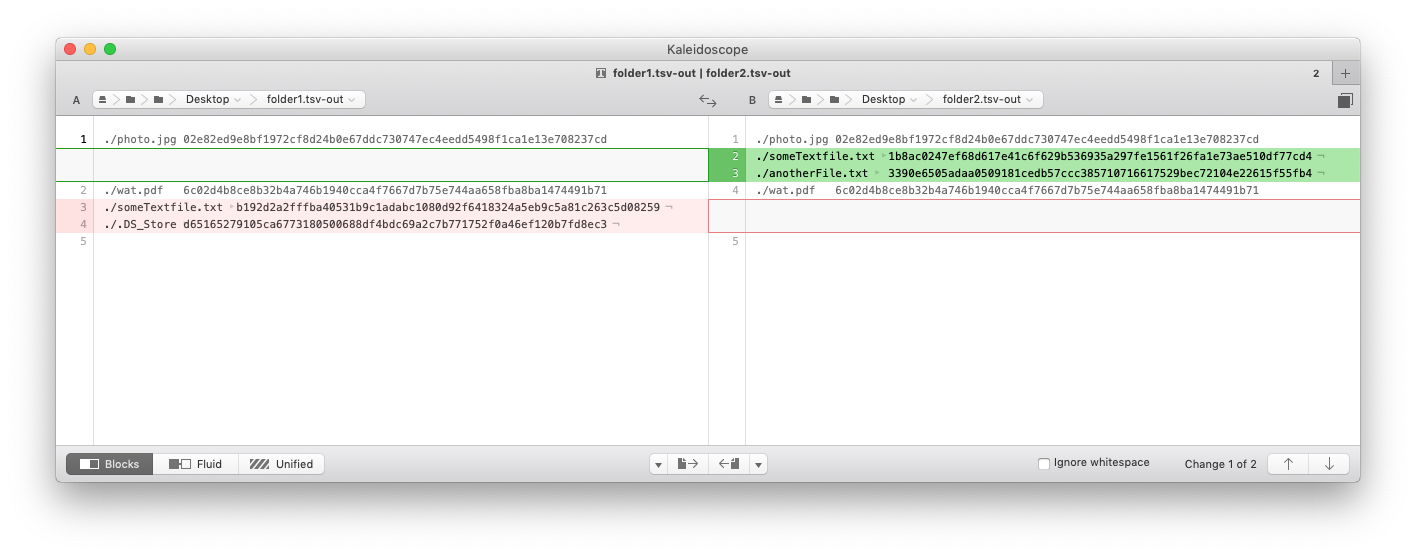

So, finally. You have two output files from the above operations. Now you can compare them with any standard diffing tool and note the differences. Here’s what I get when I do it for my simple demo with Kaleidoscope.

Here’s what I see in these results.

- photo.jpg and wat.pdf haven’t changed.
- .DS_Store exists only in folder1, but not in folder2.
- anotherFile.txt exists only in folder2, but not in folder1.
- someTextfile.txt exists in both places, but the checksum is different, meaning that its contents have probably changed. (Which is true: I edited the file for the purposes of this demo to show this case.)
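If you don’t have a diffing tool at hand, or want the four cases above as lists, a small script can sort them out. This is a sketch that assumes the `checksum<TAB>path` output format; the script name `compare.py` is hypothetical. It keys each file by its path and compares checksums, so it reports the same categories as the list above.

```python
import sys


def load_checksums(out_file):
    """Read 'checksum<TAB>path' lines into a {path: checksum} dict."""
    result = {}
    with open(out_file, encoding="utf-8") as f:
        for line in f:
            line = line.rstrip("\n")
            if line:
                checksum, path = line.split("\t", 1)
                result[path] = checksum
    return result


def compare(a, b):
    """Classify paths from two {path: checksum} dicts into four categories."""
    common = a.keys() & b.keys()
    return {
        "unchanged": sorted(p for p in common if a[p] == b[p]),
        "changed": sorted(p for p in common if a[p] != b[p]),
        "only_in_first": sorted(a.keys() - b.keys()),
        "only_in_second": sorted(b.keys() - a.keys()),
    }


if __name__ == "__main__":
    # Hypothetical usage: python3 compare.py folder1-out.tsv folder2-out.tsv
    result = compare(load_checksums(sys.argv[1]), load_checksums(sys.argv[2]))
    for category, paths in result.items():
        for path in paths:
            print(f"{category}\t{path}")
```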

You might ask why someTextfile.txt appears at a different position in the two listings. This is because the output is sorted by checksum, not by file path. (If you look at the checksum values, you will see that they are indeed sorted.) We can’t rely on the order of the file listing, because find doesn’t necessarily output files in alphabetical order. We could sort by file name ourselves, but sorting by checksum has another useful property: it groups copies of the same file together across all the different folders, so you can spot them. (Since we work a bit with Framer prototypes, Framer boilerplate files were one such case for us.)
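Sorting by checksum puts copies next to each other; if you want them listed explicitly instead, you can group the rows of several output files by checksum. This is a sketch assuming the `checksum<TAB>path` format, with the output file name used as the folder label. Keep in mind that every intact file forms a group of its own (one entry per folder), so the interesting groups are the ones with more entries than you have folders.

```python
import sys
from collections import defaultdict


def read_rows(label, out_file):
    """Yield (label, checksum, path) for each 'checksum<TAB>path' line of an output file."""
    with open(out_file, encoding="utf-8") as f:
        for line in f:
            line = line.rstrip("\n")
            if line:
                checksum, path = line.split("\t", 1)
                yield label, checksum, path


def group_by_checksum(rows):
    """Map each checksum to all (label, path) entries sharing it; keep only groups of 2+."""
    groups = defaultdict(list)
    for label, checksum, path in rows:
        groups[checksum].append((label, path))
    return {c: sorted(entries) for c, entries in groups.items() if len(entries) > 1}


if __name__ == "__main__":
    # Hypothetical usage: python3 dupes.py folder1-out.tsv folder2-out.tsv
    rows = [row for name in sys.argv[1:] for row in read_rows(name, name)]
    for checksum, entries in sorted(group_by_checksum(rows).items()):
        print(checksum)
        for label, path in entries:
            print(f"    {label}: {path}")
```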

Based on this, you can then decide how to sync up the folders. You could copy over the missing files, or update the changed files so that they match on both sides, and re-run steps 2 through 4 until you are satisfied with the results.

Final thoughts

I completed my task successfully with the above steps: I was able to make sure that indeed, the working files were correctly synced between my cloud providers.

I noted interesting differences between our cloud storage providers. Some of them preserve .DS_Store files, others don’t. The maximum file name lengths are different - sometimes very long file names get truncated on some providers. So I ended up seeing files with different names but the same contents, as evidenced by the same checksum.
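Files that were renamed or truncated in transit show up as “only in one folder” on both sides. One way to pair them up is to match checksums among the files that exist on only one side. This is a sketch working on `{path: checksum}` dicts (as loaded from the output files); it only suggests candidates, since two unrelated files with identical contents would match too.

```python
def renamed_candidates(a, b):
    """Pair paths found on only one side that share a checksum (e.g. truncated names)."""
    only_b_by_checksum = {}
    for path, checksum in b.items():
        if path not in a:
            only_b_by_checksum.setdefault(checksum, []).append(path)
    pairs = []
    for path, checksum in sorted(a.items()):
        if path in b:
            continue  # same path on both sides: not a rename candidate
        for other in sorted(only_b_by_checksum.get(checksum, [])):
            pairs.append((path, other))
    return pairs


if __name__ == "__main__":
    first = {"./a-very-long-file-name.txt": "c1", "./same.txt": "c2"}
    second = {"./a-very-long-fi.txt": "c1", "./same.txt": "c2"}
    for old, new in renamed_candidates(first, second):
        print(f"{old}  ->  {new}")
```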

You’ll notice that the checksum script is more complex than it needs to be for the exact work described here. It attempts to read both filenames and checksums from the input file, and only checksums the files that don’t already have a checksum. This is because I initially thought I wanted to make it stateful, so that it could store partial checksum state even if its work was interrupted. The assumption was that checksumming would take so long that it might need to run over multiple sessions. However, modern SSDs are very fast. On my regular iMac and MacBook Pro, checksumming takes just a few minutes across tens of thousands of files and tens of gigabytes. So the final method follows the Unix philosophy: the task is composed of independent, stateless operations, where each step’s output is the next one’s input and no step alters its input.
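The “only checksum what’s missing” idea can be sketched like this. It is an illustration, not the author’s script, and it assumes each input line is either a bare path or `checksum<TAB>path` (so paths containing tabs are not supported). Lines that already carry a checksum are passed through untouched; only bare paths get hashed. The hash function is a parameter so it can be swapped out.

```python
import hashlib


def sha256_of(path):
    """SHA-256 of a file's contents, read in 1 MiB chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()


def resume(lines, checksum_fn=sha256_of):
    """Return sorted (checksum, path) rows, hashing only lines without a checksum.

    Assumes each line is either 'path' or 'checksum<TAB>path'.
    """
    rows = []
    for line in lines:
        line = line.rstrip("\n")
        if not line:
            continue
        if "\t" in line:
            checksum, path = line.split("\t", 1)  # already done in an earlier session
        else:
            checksum, path = checksum_fn(line), line
        rows.append((checksum, path))
    return sorted(rows)
```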